In today’s data-driven world, businesses are constantly seeking innovative ways to manage, store, and analyze their ever-growing volumes of information. Enter the data lakehouse: a revolutionary architectural approach that bridges the gap between traditional data lakes and data warehouses. By combining the best of both worlds, the data lakehouse offers a unified solution for handling structured, semi-structured, and unstructured data, all while delivering high performance and cost efficiency. In this blog, we’ll dive into what a data lakehouse is, why it’s gaining traction, and how it’s changing data management for organizations around the world.

What is a Data Lakehouse?

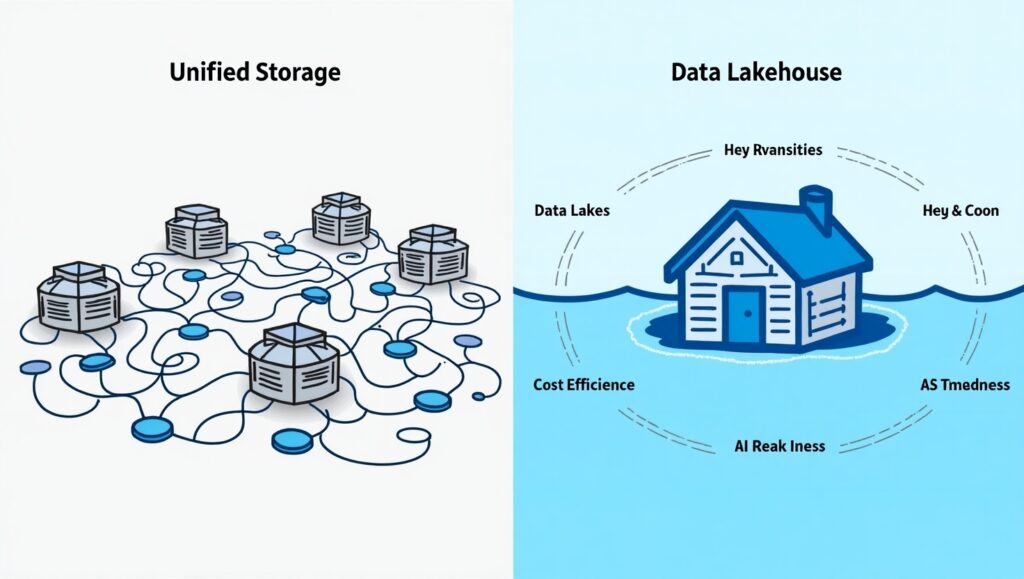

At its core, a data lakehouse integrates the strengths of data lakes and data warehouses. Data warehouses have long been the go-to for structured data, offering robust data management features and optimized query performance. However, they often fall short when it comes to scalability and handling diverse data types. Data lakes, on the other hand, provide cost-effective storage and flexibility for raw, unstructured data, but they can lack the governance and performance required for advanced analytics.

The data lakehouse solves these challenges by merging the two. It provides a single platform that supports low-cost storage (like a data lake) while maintaining the performance, governance, and reliability of a data warehouse. This makes it ideal for organizations looking to unify their data strategies, streamline workflows, and handle diverse workloads, from business intelligence to artificial intelligence and machine learning.

Why the Buzz Around Data Lakehouses?

The rising interest in this architecture reflects its transformative potential. Companies like Databricks, Dremio, Onehouse, and Skypoint Cloud are leading the charge, each bringing unique strengths to the table. Databricks offers a powerful all-in-one platform that leverages Delta Lake for reliability and scalability, making it a favorite among enterprises tackling massive-scale analytics. Dremio shines with its high-performance SQL query engine, simplifying data access without complex ETL processes. Onehouse builds on open-source frameworks like Apache Hudi, appealing to those who value flexibility and interoperability. Meanwhile, Skypoint Cloud focuses on customer-centric data solutions, breaking down silos with its Delta Lake-powered platform.

Even concepts like data smoothing (refining raw data for better quality) are gaining attention as complementary techniques within the lakehouse ecosystem. Together, these innovations highlight a clear shift: organizations are moving away from siloed systems and toward unified architectures that can handle the complexity of modern data needs.

Benefits of a Data Lakehouse

So, why are data lakehouses resonating with so many? Here are some key advantages driving their adoption:

- Unified Storage: A data lakehouse eliminates the need for separate systems by supporting all data types—structured (e.g., CSV, Parquet), semi-structured (e.g., JSON), and unstructured (e.g., images, videos)—in one place.

- Cost Efficiency: By leveraging low-cost cloud storage (like AWS S3 or Azure Data Lake), it reduces the expense of maintaining proprietary warehouse systems.

- Performance: Advanced query engines and open table formats (e.g., Apache Iceberg, Delta Lake) ensure fast analytics, rivaling traditional warehouses.

- Flexibility: Open-source formats like Parquet and Hudi prevent vendor lock-in, allowing businesses to mix and match tools as needed.

- AI and ML Readiness: With native support for data science workflows, lakehouses empower teams to build and deploy machine learning models directly on raw data.
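
To make the "open table format" idea a little more concrete, here is a toy sketch in Python of the general pattern: plain data files sit in cheap storage, and an ordered commit log records which files make up each version of the table. Everything here (`ToyTable`, `commit`, `snapshot`, the `part-*.json` file names) is hypothetical and kept in memory for illustration. Real formats such as Delta Lake and Apache Iceberg are far more involved, so treat this as a rough mental model rather than how any of them is actually implemented.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ToyTable:
    """In-memory stand-in for a table stored as data files plus a commit log."""
    files: dict = field(default_factory=dict)  # file name -> list of row dicts (the "object storage")
    log: list = field(default_factory=list)    # ordered commits, each a JSON string

    def commit(self, added, removed=()):
        """Write new data files, then publish them with a single log entry."""
        version = len(self.log)
        names = []
        for i, rows in enumerate(added):
            name = f"part-{version}-{i}.json"
            self.files[name] = rows
            names.append(name)
        # Readers only see the new files once this one entry is appended.
        self.log.append(json.dumps({"add": names, "remove": list(removed)}))
        return version

    def snapshot(self, version=None):
        """Replay the log up to `version` to find the live files, then read them."""
        if version is None:
            version = len(self.log) - 1
        live = []
        for entry in self.log[: version + 1]:
            action = json.loads(entry)
            live = [f for f in live if f not in action["remove"]]
            live.extend(action["add"])
        return [row for name in live for row in self.files[name]]


table = ToyTable()
v0 = table.commit([[{"id": 1, "amount": 10}]])
v1 = table.commit([[{"id": 2, "amount": 25}]])
# "Compaction": rewrite the two small files as one and retire the originals.
v2 = table.commit(
    [[{"id": 1, "amount": 10}, {"id": 2, "amount": 25}]],
    removed=["part-0-0.json", "part-1-0.json"],
)

first_version = table.snapshot(v0)  # reads the table as of the first commit
latest = table.snapshot()           # reads the current table
```

Because old files are never edited in place, an earlier version can still be read after compaction, which is the intuition behind the reliability these formats add on top of low-cost storage.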

Real-World Impact: Who’s Leading the Charge?

The influence of Databricks, Dremio, Onehouse, and Skypoint Cloud is undeniable. For instance, Databricks powers analytics for giants like Walmart, proving its mettle at scale. Dremio’s focus on self-service analytics and governance appeals to businesses streamlining their workflows. Onehouse caters to those embracing open-source solutions, while Skypoint Cloud delivers actionable insights for customer-focused enterprises. These platforms are shaping how organizations approach data, from startups to global corporations.

Challenges to Consider

Despite its promise, the data lakehouse isn’t without hurdles. Implementing one requires careful planning to avoid data swamps—a common pitfall of ungoverned data lakes. Performance can also dip with extremely large datasets if not optimized properly. However, tools from Databricks, Dremio, and others are tackling these issues with robust governance, automated optimization, and scalable designs.
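
One simple guard against a data swamp is to check records against an agreed schema before they land in the lakehouse. The sketch below shows that idea in plain Python. The schema, column names, and the `validate` and `ingest` helpers are all made up for illustration; in practice the platforms mentioned above offer their own governance features, which this does not attempt to reproduce.

```python
# Hypothetical schema for an "orders" dataset: column name -> expected Python type.
EXPECTED_SCHEMA = {"order_id": int, "customer": str, "amount": float}


def validate(record, schema=EXPECTED_SCHEMA):
    """Return a list of human-readable problems; an empty list means the record is fine."""
    problems = []
    for column, expected_type in schema.items():
        if column not in record:
            problems.append(f"missing column: {column}")
        elif not isinstance(record[column], expected_type):
            actual = type(record[column]).__name__
            problems.append(f"{column}: expected {expected_type.__name__}, got {actual}")
    for column in record:
        if column not in schema:
            problems.append(f"unexpected column: {column}")
    return problems


def ingest(records, schema=EXPECTED_SCHEMA):
    """Split records into those that match the schema and those set aside for review."""
    accepted, quarantined = [], []
    for record in records:
        problems = validate(record, schema)
        if problems:
            quarantined.append((record, problems))
        else:
            accepted.append(record)
    return accepted, quarantined


incoming = [
    {"order_id": 1, "customer": "Ada", "amount": 19.99},
    {"order_id": "2", "customer": "Grace"},  # wrong type and a missing column
]
accepted, quarantined = ingest(incoming)
```

Setting bad records aside instead of dropping them keeps the raw data available for later repair while stopping it from polluting the tables analysts query.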

The Future of Data Management

The data lakehouse is more than a trend—it’s a paradigm shift. As data volumes explode and AI/ML use cases expand in 2025, this architecture is poised to become the backbone of modern data strategies. Whether you’re a data engineer exploring Dremio’s query acceleration, a business leader eyeing Databricks’ unified platform, or a startup intrigued by Onehouse’s open-source approach, the lakehouse offers something for everyone.

If you’re still juggling separate lakes and warehouses, it might be time to dive into the lakehouse revolution. Your data—and your bottom line—will thank you.
